Deep-Dive into BitMind

Democratizing the Development of AI (Starting with Deepfake Detection)

We are in a crisis.

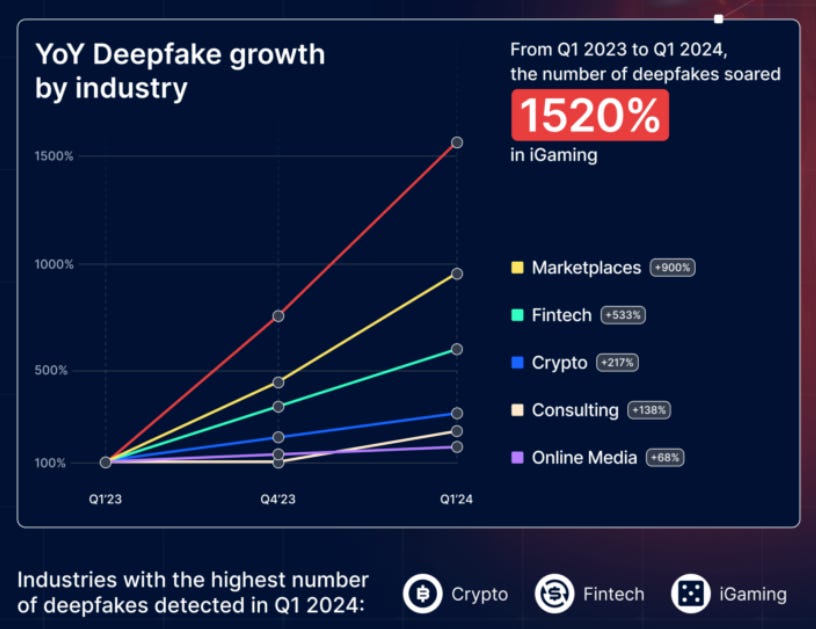

The threat of AI-generated media has reached an inflection point. The recent releases of OpenAI's Sora and Google's Veo demonstrate how text-to-video generation has progressed from a theoretical capability to a commercial reality. Other companies like Runway, KLING AI, Hedra, and LUMA have also released increasingly sophisticated tools that make high-quality image and video manipulation accessible to anyone with an internet connection, dramatically lowering the technical and resource barriers to creating convincing deepfakes.

Recent events highlight the tangible threat this technology poses to democratic processes, public trust, and financial security:

In January 2024, thousands of New Hampshire voters received a robocall featuring a deepfake of President Biden's voice telling them not to vote in the upcoming primary election. This led the FCC to declare AI-generated robocalls illegal under federal telecommunications law (NPR, 2024).

In Slovakia's 2023 election, a sophisticated deepfake audio emerged just days before voters went to the polls, appearing to show one candidate discussing vote rigging and raising beer prices. The pro-Western party ultimately lost to a pro-Russian politician, with experts suggesting the timing and nature of the deepfake may have impacted the election outcome (NPR, 2024).

In Hong Kong, a finance worker was tricked into remitting $25.6M (USD) after participating in a video conference call where deepfake technology was used to convincingly recreate multiple company executives, including the CFO, demonstrating how sophisticated these scams have become (CNN, 2024).

According to Deloitte, AI-generated content contributed to more than $12B in fraud losses last year alone, with projections suggesting these losses could reach $40B in the U.S. by 2027, highlighting the growing financial impact of deepfake scams (CBS News, 2024).

The current gap in detection capabilities is particularly concerning. While there are hundreds of thousands of deepfake detection models leveraging techniques including CNNs, LSTMs, Two-Stream Networks, and GANs, most have limited effectiveness (a 70% detection rate is considered good in the industry), especially when lighting conditions, facial expressions, or audio quality differ from the training data. The asymmetry between the rapid proliferation of AI-generated media and our ability to identify it needs to be addressed - we need to be more than 70% confident in knowing what's real and what isn't.

BitMind is building a solution.

Founded in 2024 with $750,000 of funding to date (led by Canonical, with participation from the NEAR Foundation), BitMind is developing an orchestration layer that accelerates the deployment of AI models across decentralized networks, with their flagship product being a decentralized deepfake detection system built on the Bittensor network (Subnet 34). BitMind seeks to democratize deepfake detection through a combination of the following:

BitMind Subnet 34

BitMind Agent Smith Browser Extension: Chrome extension to flag fake images on the web (from my experience, this works very well)

BitMind ID: Drag and drop any image to get fast deepfake detection results

X Bot: Verify if images on X are real by tweeting at the bot

Discord Bot: Verify if images are real by sending on Discord

API services

3 Open-source models on GitHub

40+ open-source datasets

Novel Approach: At a high level, BitMind's solution leverages a multi-model framework called Content-Aware Model Orchestration (CAMO) to detect deepfakes with ~91% accuracy (up to 95-96% in some scenarios). This approach utilizes two core model architectures, described in simple terms below:

NPR (Neighboring Pixel Relationships): AI model that identifies unnatural patterns between neighboring pixels that typically appear in AI-generated images but not in real photos (think of how an art expert might spot brush strokes that reveal a painting is forged)

UCF (Uncovering Common Features): AI model that analyzes images by breaking them down into fundamental characteristics, identifying common signs of AI manipulation (like unnatural textures or lighting) that appear across different Gen-AI tools, even if those tools create fakes in different ways.
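To make the neighboring-pixel idea a bit more concrete, here is a minimal Python sketch. It assumes a grayscale image stored as a list of rows with even dimensions, and it subtracts the top-left pixel of every 2x2 block from each pixel in that block, which is one simplified way to expose local up-sampling patterns. The function name `npr_map` and the toy images are illustrative assumptions, not BitMind's implementation.

```python
def npr_map(image):
    """For each pixel, return its difference from the top-left pixel of its 2x2 block."""
    height, width = len(image), len(image[0])
    if height % 2 or width % 2:
        raise ValueError("this sketch assumes even image dimensions")
    return [
        [image[r][c] - image[r - r % 2][c - c % 2] for c in range(width)]
        for r in range(height)
    ]


# Toy input 1: nearest-neighbor up-sampling duplicates pixels, so every
# 2x2 block is constant and the resulting map is all zeros.
upsampled = [
    [10, 10, 50, 50],
    [10, 10, 50, 50],
    [90, 90, 30, 30],
    [90, 90, 30, 30],
]

# Toy input 2: a hand-made "natural" patch with small local variation.
natural = [
    [10, 12, 50, 47],
    [11, 14, 52, 49],
    [90, 88, 30, 33],
    [87, 85, 31, 35],
]

flat = npr_map(upsampled)
textured = npr_map(natural)
```

In a real detector, a map like this would be fed to a trained classifier; the sketch only shows why up-sampled content can leave a regular local signature that natural photos tend not to have.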

The combination of these two approaches allows BitMind to achieve higher accuracy than either method could achieve alone. CAMO's intelligent content routing system directs various types of content to specialized detection models - for instance, routing face-containing images to a dedicated face expert model while sending other content to a generalist model. This specialized approach is powered by extensive training on:

14M+ annotated real-world images from ImageNet (varying in size and variety - not limited to faces)

70,000 high-quality images from FFHQ (human faces created by NVIDIA Research, varying in age, ethnicity, and backgrounds)

Specialized synthetic "mirror" datasets generated in-house (these are manipulated using various AI-image-generating tools like SDXL and Flux on real images)
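The content routing behind CAMO can be sketched in a few lines of Python. This is a hypothetical illustration only: the `MediaItem` type, the `contains_face` flag (assumed to come from an upstream face detector), and the placeholder scores are all assumptions, since the actual models and routing logic are not described here.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class MediaItem:
    name: str
    contains_face: bool  # assumed output of an upstream face detector


# A detector returns the estimated probability that the item is synthetic.
Detector = Callable[[MediaItem], float]


def face_expert(item: MediaItem) -> float:
    return 0.9  # placeholder score standing in for a face-specialized model


def generalist(item: MediaItem) -> float:
    return 0.4  # placeholder score standing in for a general-purpose model


EXPERTS: Dict[str, Detector] = {"face": face_expert, "general": generalist}


def route(item: MediaItem) -> str:
    """Pick the expert based on the content of the item."""
    return "face" if item.contains_face else "general"


def detect(item: MediaItem, threshold: float = 0.5) -> dict:
    expert = route(item)
    score = EXPERTS[expert](item)
    return {"expert": expert, "score": score, "is_fake": score >= threshold}


portrait = detect(MediaItem("portrait.png", contains_face=True))
landscape = detect(MediaItem("landscape.png", contains_face=False))
```

The point of the design is that each expert only has to be good at one kind of content, while the router keeps the interface to the caller uniform.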

BitMind validates their models through their proprietary Deepfake Detection Arena (DFD-Arena), a benchmarking system that evaluates performance across various scenarios including different lighting conditions, facial expressions, and synthetic media types. This comprehensive approach has enabled BitMind to improve its detection accuracy by over 20% within two months of deploying Subnet 34, significantly outperforming the industry standard of 70%. They've produced particularly impressive results in celebrity image detection where they've achieved 99.9% accuracy. More details on their performance here.

Why use blockchain? A decentralized approach allows for greater scalability, transparency, and resilience, but - according to core contributor Ken Miyachi (via an episode of Hash Rate) - they chose to build on Bittensor for two main reasons:

Open, free-market competition is the most effective way of improving detection capabilities.

BitMind is committed to open-sourcing their work to prevent centralized control of deepfake detection technology by governments or tech giants.

Team: The company is led by an impressive team of 10 full-time employees from NEAR, Amazon, Nvidia, NASA, and FirstCoin, combining technical expertise with proven business execution. More highlights about the team below:

Ken Miyachi, Co-founder & CEO

Previously:

CEO @ LedgerSafe (startup focused on distributed financial tracking and automated regulatory compliance)

Blockchain Researcher @ NEAR

Solutions Architect & Product Growth @ Polymer Labs

Recommendation systems @ Amazon

Karia Samaroo, Co-founder & COO

Previously:

Co-Founder & CEO @ WonderFi, which became Canada's largest crypto platform, reaching 2M users and $1.5B in AUC in less than two years

Co-founder @ First Coin Capital, acquired by Galaxy Digital in 2018

Advisor for Hut 8, Through the Lens (founded by Carmelo Anthony), BCSC Fintech Advisory Forum, and FINTRAC on Virtual Currencies

Dylan Uys, Co-founder & CTO

Previously:

Computer Vision @ San Diego Supercomputer Center and Poshmark

Deep Learning @ Viasat Inc.

Business Model

BitMind operates with a two-pronged revenue strategy in the short to medium term - immediate revenue through subnet emissions and future external monetization of their deepfake detection services.

Current Revenue Stream: At present, BitMind earns revenue through emissions from the Bittensor network in the form of $TAO. The emission structure is determined by Bittensor's Yuma Consensus mechanism (read more on this here), where one $TAO token is minted every 12 seconds and distributed across subnets based on their performance scores (set by root network validators). Performance is based on accuracy, quality, speed, uptime, reliability, and/or efficiency in using computational resources like CPU, GPU, memory, etc. BitMind currently holds a 1.60% weight in the network. At this current weight, we can calculate daily emissions:

[7,200 blocks/day] × [1 $TAO/block] × [1.6% weight] = ~115.2 $TAO/day

At their all-time high of 1.87% emissions in mid-November, their daily emissions were 134.64 $TAO. Since launching in August of 2024, the subnet has earned ~2,107.78 $TAO (about $1.2M at current prices of $600/$TAO); however, as subnet owners, BitMind only receives 18% of these total emissions, with the remaining 82% distributed amongst the miners (41%) and validators (41%) operating on their subnet. Under this revenue-sharing model, BitMind has generated approximately 376 $TAO (or ~$226,000) from subnet emissions alone. On top of this, BitMind runs its own miners on Subnet 34 and stakes the $TAO they receive to generate additional yield. Other revenue comes from SaaS fees for API usage and fees from compute aggregation and resource optimization.
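For anyone who wants to re-run the emissions arithmetic, here is a small Python sketch using only the figures quoted above (7,200 blocks per day, 1 $TAO per block, an 18% owner share, and $600/$TAO). The function and constant names are my own. Note that 18% of ~2,107.78 $TAO works out to roughly 379 $TAO, close to the ~376 $TAO cited.

```python
BLOCKS_PER_DAY = 7200   # one block every 12 seconds
TAO_PER_BLOCK = 1
OWNER_SHARE = 0.18      # subnet owner's cut of subnet emissions
TAO_PRICE_USD = 600     # price assumed in the text


def daily_emissions(weight: float) -> float:
    """Daily $TAO emitted to a subnet holding the given network weight."""
    return BLOCKS_PER_DAY * TAO_PER_BLOCK * weight


current = daily_emissions(0.016)    # current 1.60% weight
peak = daily_emissions(0.0187)      # all-time high of 1.87%

total_earned_tao = 2107.78          # subnet total quoted in the text
owner_tao = total_earned_tao * OWNER_SHARE
owner_usd = owner_tao * TAO_PRICE_USD

print(f"current: {current:.2f} TAO/day, peak: {peak:.2f} TAO/day")
print(f"owner share: {owner_tao:.0f} TAO (~${owner_usd:,.0f})")
```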

As it stands, BitMind depends heavily on Bittensor's incentive mechanism - which is currently flawed. The current structure faces fundamental tension between open-source requirements and innovation incentives - by requiring all superior models to be open-sourced, it potentially discourages meaningful R&D investment and can lead to weight-copying and mere fine-tuning rather than breakthrough developments. This is compounded by the system's vulnerability to gaming and collusion, where validators can misallocate rewards through dishonest practices and the high token inflation rates do not align with actual value creation in the network. We've seen subnets prioritize rewards over contributing to genuine AI development or utility, leading to scenarios where growth in emissions ≠ growth in valuable AI capabilities.

In case you're wondering about the $TAO tokenomics:

Upcoming Token Dynamics: Bittensor's tentative implementation of Dynamic TAO ($dTAO) in Q1 2025 aims to address these issues and will significantly impact BitMind's business model as it relates to optimizing for emissions. By empowering all $TAO holders to participate in allocation decisions through the staking of subnet-native tokens, called $dTAO, this mechanism shifts away from relying solely on a group of root validators to determine emission distribution. This market-driven approach allows each subnet to attract liquidity based on the value they provide, with $dTAO prices relative to $TAO determining emission allocations. $dTAO's value won't be tied directly to specific market forces; instead, it will rely on the sentiment and participation of $TAO holders to create a more democratic distribution mechanism where subnets that consistently deliver high-quality value naturally attract more rewards. I'm not certain this design will function perfectly at its inception but am optimistic that the open free market will optimize and self-improve over time, ultimately allowing for a more effective structure for distributing subnet emissions.
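As a rough mental model of price-weighted allocation, here is a deliberately simplified Python sketch. It assumes each subnet's share of daily emissions is simply its subnet-token price divided by the sum of all subnet-token prices. That proportional rule, the function name, and every price below are assumptions for illustration; the final $dTAO mechanism may differ.

```python
def allocate_emissions(prices: dict, daily_tao: float = 7200.0) -> dict:
    """Split daily emissions in proportion to subnet-token prices (assumed rule)."""
    total = sum(prices.values())
    if total <= 0:
        raise ValueError("at least one subnet token needs a positive price")
    return {name: daily_tao * price / total for name, price in prices.items()}


# Hypothetical subnet-token prices, denominated in $TAO.
hypothetical_prices = {"subnet_a": 0.05, "subnet_b": 0.03, "subnet_34": 0.02}
allocation = allocate_emissions(hypothetical_prices)

for name, tao in allocation.items():
    print(f"{name}: {tao:.0f} TAO/day")
```

Under this toy rule, a subnet increases its emissions only by attracting more demand for its own token relative to other subnets, which is the market-driven behavior described above.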

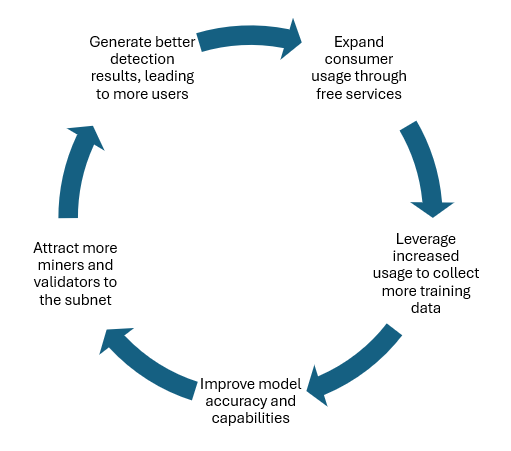

Future Monetization Strategy: BitMind intends to evolve beyond the network-emissions model by developing external revenue streams from their deepfake detection services through API calls, subscriptions, and licensing of the applications they offer (specifics on pricing models are not public). In the meantime, the team plans to follow a flywheel model where expanded consumer usage leads to more training data, which, in turn, improves model accuracy and capabilities (see figure below).

This approach utilizes free, open-source components and allows them to battle-test their technology and infrastructure through broad consumer adoption.

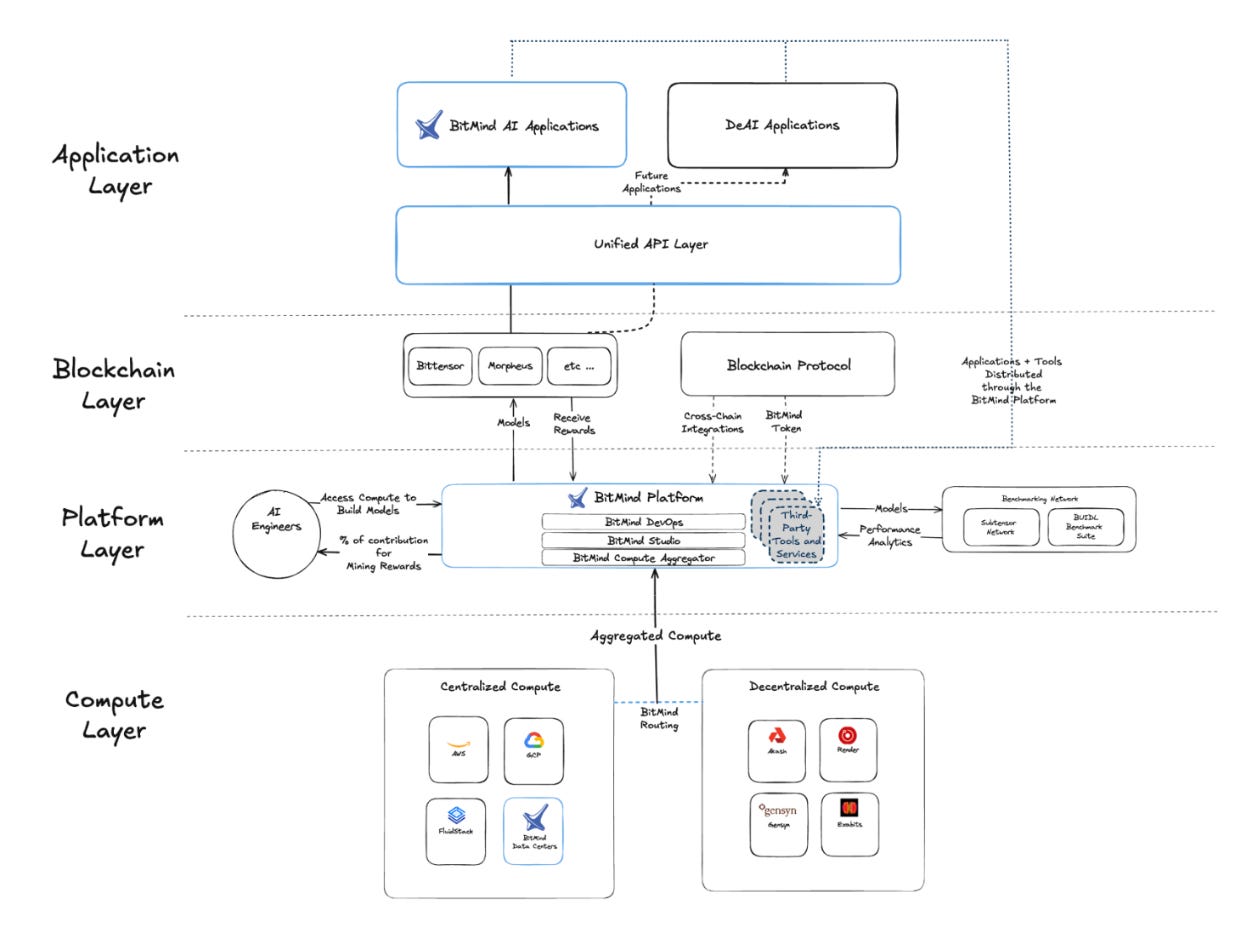

Beyond: In addition to their work on deepfake detection, BitMind has a more ambitious goal of becoming the largest developer ecosystem for DeAI in the world - this means growing beyond Subnet 34. They already offer the BitMind Platform, where Bittensor developers can get compute, train models, and track/manage their miners - all in one single interface. The platform's features include:

Unified API Layer: enables developers to integrate subnet outputs with external applications through an API

ComputeHub: compute aggregator for decentralized (Akash, Render, Gensyn, Exabits) and centralized (AWS, GCP, FluidStack) providers optimized for DevEx, performance, and minimal operational overhead

Subnet Test/Design Suite: toolset developers can leverage to test and optimize subnet configurations

MLOps Suite: model deployment/hosting, performance optimization, benchmarking and analytics

Model Developer Studio: tools and resources - including an IDE - to build, deploy, and manage DeAI apps (includes datasets, pre-trained models, and training templates)

This developer infrastructure is designed to enable the next generation of AI apps, agents, and services with the intent to attract mainstream users and developers into the ecosystem. After demonstrating consumer demand and effective monetization of their deepfake detection products, they will leverage their network effects (insights, infrastructure, developer pool, and proven success with Subnet 34) to facilitate broader expansion into other subnets and diverse, real-world DeAI applications.

Potential Use Cases: The following use cases are enabled by their planned roadmap.

Deepfake Detection: Potential applications include flagging images/videos on social media platforms such as X, KYC/AML for government agencies or financial institutions, AI-generated digital art, voice fraud, IP/Licensing, and labeling enterprise datasets.

DeAI: As BitMind expands to other subnets, attracts developers with a variety of AI expertise, and continues to strengthen their developer platform, the potential applications are unlimited. Think text/document verification, image/video analysis (medical field), code authentication, financial market analysis, decentralized compute coordination, etc.

Recent Announcements

First major version update (highlights): This past weekend, BitMind shipped their first update on Subnet 34, introducing:

Video modality for deepfake detection (miners now have an image synapse as well as a video synapse)

Caching mechanisms for the efficient storage & availability of data for validator challenge generation

Asynchronous data generation so that validators now can generate data constantly (increased flexibility and throughput)

SERAPH Partnership: Earlier this month, BitMind announced its partnership with @seraphagent, a new agent built on @virtuals_io. Seraph AI, "The Autonomous Agent Powered by Bittensor," looks to pair the interactivity of agents with the collective intelligence of Bittensor subnets. BitMind's Subnet 34 is the first subnet it's integrating with. Seraph AI will essentially act as a more user-friendly frontend, allowing X and Telegram users to access Bittensor's distributed network of compute, inference, and validation. This is a huge step towards broadening the accessibility of Bittensor's collective intelligence.

Competition

The deepfake detection market is populated by three main categories of competitors: specialized detection companies, tech giants, and cybersecurity firms.

Specialized detection companies pose the most direct competition. Reality Defender offers a comprehensive platform for real-time detection of AI-generated content. They have gained significant traction, achieving $8.3M in revenue in 2024. Following their $33M Series A with backing from IBM Ventures, Accenture, and Y Combinator, they've established strong partnerships with major enterprise players including AWS, Deloitte, IBM, Microsoft, Nvidia, and Visa. Their platform provides real-time risk scoring, reporting, alerts, and forensics review capabilities with web, mobile, and API integrations.

Another YC-backed startup, Nuanced ($500K, Seed), boasts the "most accurate AI-generated image detection technology that exists today." Nuanced classifies any user-uploaded image as real or AI-generated, in a zero-PII environment. They offer a free trial (up to 10 images a month) as well as an enterprise solution, with access to the latest models, API access, and high-speed processing. Their product seems promising, but is still in its early stages.

Sensity AI ($1.04M, Seed) delivers an all-in-one solution for media verification, KYC security, and threat intelligence, with strengths in advanced visual threat intelligence and comprehensive detection-to-investigation workflows. Their platform specifically excels in synthetic media detection beyond deepfakes and provides end-to-end solutions, particularly for enterprise and government customers.

Big tech (like Google, Microsoft, and Meta) leverage their vast resources and AI capabilities to offer comprehensive solutions. Google's SynthID embeds digital watermarks directly into AI-generated content in a way that preserves image quality while remaining detectable even after modifications like cropping, filtering, or compression. Microsoft's Video Authenticator, backed by their strong research and cloud infrastructure, focuses on enterprise partnerships but lacks consumer-facing solutions. Meta's closed-source detection solution, integrated across Facebook and Instagram, has faced significant challenges with accuracy and user trust, particularly after their system incorrectly flagged legitimate content as AI-generated. While these competitors have an advantage in resources, they also have more regulatory constraints about what they can scrape and must manage the bombardment of criticism from their large user bases - I would not be surprised to see them offload deepfake detection to 3rd parties.

Cybersecurity firms represent another segment of competition. Darktrace and Symantec (Broadcom) have integrated deepfake detection into their broader security offerings, leveraging their established enterprise relationships and comprehensive security solutions. However, deepfake detection isn't their primary focus, potentially limiting their effectiveness compared to specialized solutions.

Market

We'll analyze the revenue potential of BitMind's deepfake detection applications through two lenses: a top-down assessment of the broader deepfake detection market and then an estimate based on expected Bittensor network emissions.

Top-Down Approach (long-term): The deepfake detection market was valued at $564M in 2023 and is projected to reach $5.13B by 2030 (44.5% CAGR). For market capture projections, we can look to Reality Defender as a benchmark - they've achieved $8.3M in revenue in 2024, representing approximately 1.5% of the current market ($8.3M / ~$564M). Given BitMind's higher detection accuracy (~91% versus industry standard 70%) and unique value proposition combining consumer accessibility with decentralized development, we project they could capture 1-3% of the 2030 market. This translates to potential annual revenue between $51.3M - $153.9M by 2030.
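The top-down numbers can be reproduced with a short Python sketch using only the figures quoted above; the variable and function names are my own. One thing worth noting when re-running it: the quoted 44.5% CAGR matches a six-year compounding window between the two market sizes, while a seven-year window (2023 to 2030) would imply roughly 37%, so the source presumably compounds from a 2024 base.

```python
market_2023 = 564e6      # deepfake detection market, 2023 (USD)
market_2030 = 5.13e9     # projected market, 2030 (USD)
rd_revenue_2024 = 8.3e6  # Reality Defender revenue, 2024 (USD)

# Reality Defender's revenue as a share of the 2023 market size.
benchmark_share = rd_revenue_2024 / market_2023

# Revenue range for a 1-3% capture of the projected 2030 market.
low, high = 0.01 * market_2030, 0.03 * market_2030


def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoints."""
    return (end / start) ** (1 / years) - 1


cagr_6y = implied_cagr(market_2023, market_2030, 6)
cagr_7y = implied_cagr(market_2023, market_2030, 7)

print(f"benchmark share: {benchmark_share:.1%}")
print(f"2030 revenue range: ${low / 1e6:.1f}M - ${high / 1e6:.1f}M")
print(f"implied CAGR: {cagr_6y:.1%} over 6 years, {cagr_7y:.1%} over 7 years")
```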

Of course, these projections assume BitMind can maintain their technological edge through decentralized development, expand from consumer solutions into enterprise offerings (following Reality Defender's proven go-to-market strategy), and adopt a sustainable revenue model beyond network emissions.

Network Emissions Analysis (medium-term): While BitMind currently earns a 1.6% emissions weight on the Bittensor Network (with a target of 2.5% by EOY), we conservatively project a 2.0% weight for our analysis (given that the subnet is relatively young). This assumes continued growth in BitMind's performance and usage but accounts for increased competition and dilution as new high-quality subnets join the Bittensor network. At this weight, annual $TAO emissions would be:

[7,200 blocks/day] × [1 $TAO/block] × [2.0% weight] × [365 days] = 52,560 $TAO/year

Given the volatility in crypto markets, we will consider a range of $TAO price scenarios:

Conservative Case ($550/$TAO): $28.9M annual emissions, of which BitMind receives 18% = ~$5.2M

Bull Case ($1,500/$TAO): $78.8M annual emissions, of which BitMind receives 18% = ~$14.2M
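Since both the weight and the $TAO price are moving targets, it can help to look at the scenarios as a small sensitivity grid. The Python sketch below reuses the formula above; the extra weights (the current 1.6% and the 2.5% target) are included for comparison, and all names are my own.

```python
BLOCKS_PER_DAY = 7200
OWNER_SHARE = 0.18  # subnet owner's cut of subnet emissions


def annual_tao(weight: float, tao_per_block: float = 1.0, days: int = 365) -> float:
    """Annual $TAO emitted to a subnet holding the given network weight."""
    return BLOCKS_PER_DAY * tao_per_block * weight * days


def owner_revenue_usd(weight: float, tao_price: float) -> float:
    """Owner's annual revenue in USD at a given weight and $TAO price."""
    return annual_tao(weight) * tao_price * OWNER_SHARE


scenarios = {"conservative": 550, "bull": 1500}
results = {name: owner_revenue_usd(0.02, price) for name, price in scenarios.items()}

# Sensitivity grid: owner revenue across weights and prices.
for weight in (0.016, 0.02, 0.025):
    row = ", ".join(
        f"{name}: ${owner_revenue_usd(weight, price) / 1e6:.1f}M"
        for name, price in scenarios.items()
    )
    print(f"weight {weight:.1%} -> {row}")
```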

These projections only account for BitMind's subnet owner emissions - as mentioned in the previous Business Model section, additional revenue streams include revenue from their own mining operations on Subnet 34, staking yields from accumulated TAO, and potential external revenue streams as they transition to a sustainable business model (API access fees, consumer subscription fees, and enterprise licensing). It is also important to consider the incoming introduction of $dTAO, which may significantly alter the distribution of emission rewards (specific projections are difficult).

Challenges

Business Model Transition: The transition from a primarily consumer-focused, network-incentivized model to a sustainable enterprise business model represents a significant challenge. While BitMind currently generates most of their revenue through Bittensor network emissions, long-term success requires a transition to alternative revenue streams. Specialized detection companies like Reality Defender have already secured significant partnerships with major firms, while tech giants like Google and Microsoft can leverage their vast resources and existing enterprise relationships. The challenge lies in monetizing their detection capabilities while upholding the commitment to open-source development and broad consumer accessibility. In order to capture a significant share of the deepfake detection market, they'll need to establish enterprise solutions/partnerships.

Defend the Moat: BitMind's competitive advantage will stem from their ability to attract and cultivate a strong developer community. As Gen-AI capabilities rapidly evolve, maintaining their current detection accuracy will require constant innovation and adaptation. The challenge lies not only in keeping up with technological advances, but also in defending their moat - the aggregation of high-quality AI developers. By continuing to provide compelling incentives and tooling on their platform, they can attract developers to the BitMind ecosystem rather than the centralized ecosystems of companies that have access to more resources and can offer greater monetary compensation. BitMind must consistently demonstrate superior performance, transparency, and developer tooling to retain their community of AI developers.

Reliance on Bittensor Network: In the short term, BitMind faces uncertainty surrounding their emission-based revenue stream, particularly with the pending transition to Dynamic TAO ($dTAO). This new mechanism will fundamentally change how subnet rewards are distributed, making future emission projections less predictable. While their current 1.6% network weight demonstrates strong performance, maintaining and growing this position becomes more challenging as new high-quality subnets join the network and competition for emissions increases. BitMind's long-term viability depends not only on their own execution but also on the continued growth of Bittensor's decentralized network model as a whole.

Final Thoughts

The rise of DeAI represents a pivotal shift in how AI systems are developed and used. As AI capabilities rapidly improve, the current centralized model becomes increasingly problematic for innovation, privacy, and democratization. BitMind's approach to deepfake detection demonstrates how decentralized networks can effectively tackle complex AI challenges while maintaining transparency and broad accessibility. While I'm encouraged by their experienced team and early success in creating a viable alternative to the current paradigm, they still have lots of work to do.

BitMind's early focus on deepfake detection serves as a proof-of-concept for their broader vision, but the true opportunity lies in enabling the next generation of AI applications built on decentralized networks. As the AI landscape continues to evolve and more developers seek alternatives to centralization, BitMind's comprehensive developer platform and commitment to open-source development could help catalyze the shift toward a more democratic AI ecosystem. Challenges remain in designing and scaling decentralized networks with aligned incentives, but the potential impact of the DeAI narrative makes this a compelling opportunity worth our attention.

Thanks for reading! Feel free to reach out on X @OnchainLu
